Key Takeaways

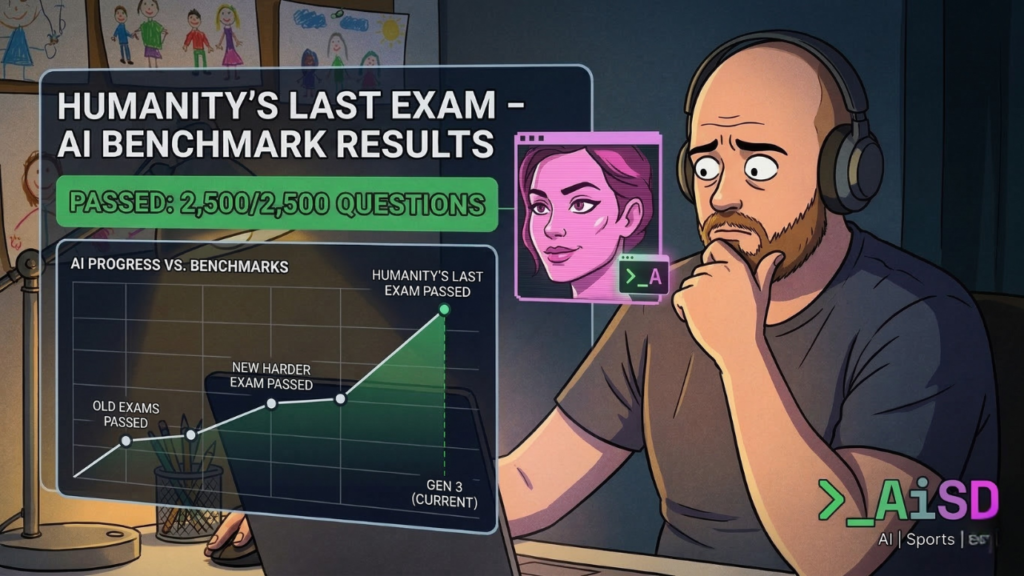

Nearly 1,000 subject matter experts designed an exam called “Humanity’s Last Exam” to judge how fast AI is improving, because AI kept maxing out every other test.

The AI systems started passing that one too.

If you gave up on AI tools 12 months ago and haven’t looked back, the version you tested is probably several generations behind what’s available now.

I’ve been loosely following something called “Humanity’s Last Exam” for a few months, and this week it finally made me stop scrolling and actually pay attention.

Here’s the short version: almost 1,000 academics, scientists, and specialists spent months building a 2,500-question exam specifically because AI systems kept maxing out every benchmark researchers threw at them. The questions were deliberately obscure: niche academic fields, edge cases, problems that were supposed to require genuine understanding rather than pattern-matching. The kind of thing you’d design if someone asked you to make a test AI couldn’t pass.

Then the AI systems started passing it too.

If you’re asking how fast AI is improving, that’s your answer. Fast enough that the people building the measuring sticks can’t keep up.

This isn’t a story about robots taking over. It’s a story about pace, and why it matters for anyone who tried an AI tool a year ago, found it underwhelming, and moved on.

How Fast Is AI Improving? Faster Than the Tests Can Measure

A benchmark is just a standardised test for what an AI can and can’t do, like a school exam designed to find the edge of what a student knows. When every student scores 100%, the test stops being useful. You build a harder one.

AI researchers have been stuck in that loop for a while now. Each time they design something they think will hold, the systems catch up faster than anticipated. “Humanity’s Last Exam” was the latest attempt. It didn’t hold as long as they’d hoped.

I find this oddly useful information, not because I care about academic benchmarks, but because it reframes how I think about the tools I’m actually using. If the ceiling is rising this fast, the floor is too. And the floor is where most of us are working.

What This Actually Means for the Tools You Gave Up On

If you tried an AI tool in 2024 and found it unreliable, you might be right about that version. You might not be right about the current one.

The improvement cycle in AI is faster than anything in consumer technology since the early years of smartphones. Tools that got your dates wrong, misread your calendar, or gave you nonsense summaries 18 months ago have gone through multiple significant updates. Some are genuinely different products now: not incrementally better, structurally different.

Your word processor works the same as it did three years ago. AI tools are not on the same curve.

I had this exact thing happen with an email summarisation tool I’d written off completely in early 2024. Tried it again a few months ago on a whim. It was night and day. I use it most mornings now.

The Practical Bit (For People With No Time to Experiment)

This doesn’t mean you need to retest every AI tool every month. Your time isn’t unlimited and your patience for things that don’t work is, reasonably, low.

It means it’s worth revisiting the specific ones you gave up on. In particular:

If you tried an AI email summariser and it was wrong more than it was right, test it again this week.

If you tried a voice assistant that kept misunderstanding you, it’s had several updates since.

If you watched a demo of an AI tool and thought “that won’t work for me”, that demo might be a year old.

The floor of what these tools can reliably do is rising. What was a disappointing experiment in 2024 might now be a genuinely useful daily habit. Not because all the hype has been justified (it hasn’t), but because the underlying capability has changed enough that it’s worth a second look.

One Thing to Try This Week

Pick one AI tool you gave up on in the last 18 months. Give it ten minutes on something specific from your actual life: not a demo, not a test scenario. A real school email. A real calendar problem. A real question you needed answering last Tuesday.

If it still doesn’t work, you’ve confirmed it. Move on. If it does work now, you’ve recovered something useful from a tool you already know about, with no new learning curve required.

That’s a reasonable use of ten minutes.

Cliff writes at theaisportsdad.com about using AI to get your time back, without the hype and without needing to understand how any of it works.